Was the COVID-19 Virus Designed? The Computer Doesn’t Know

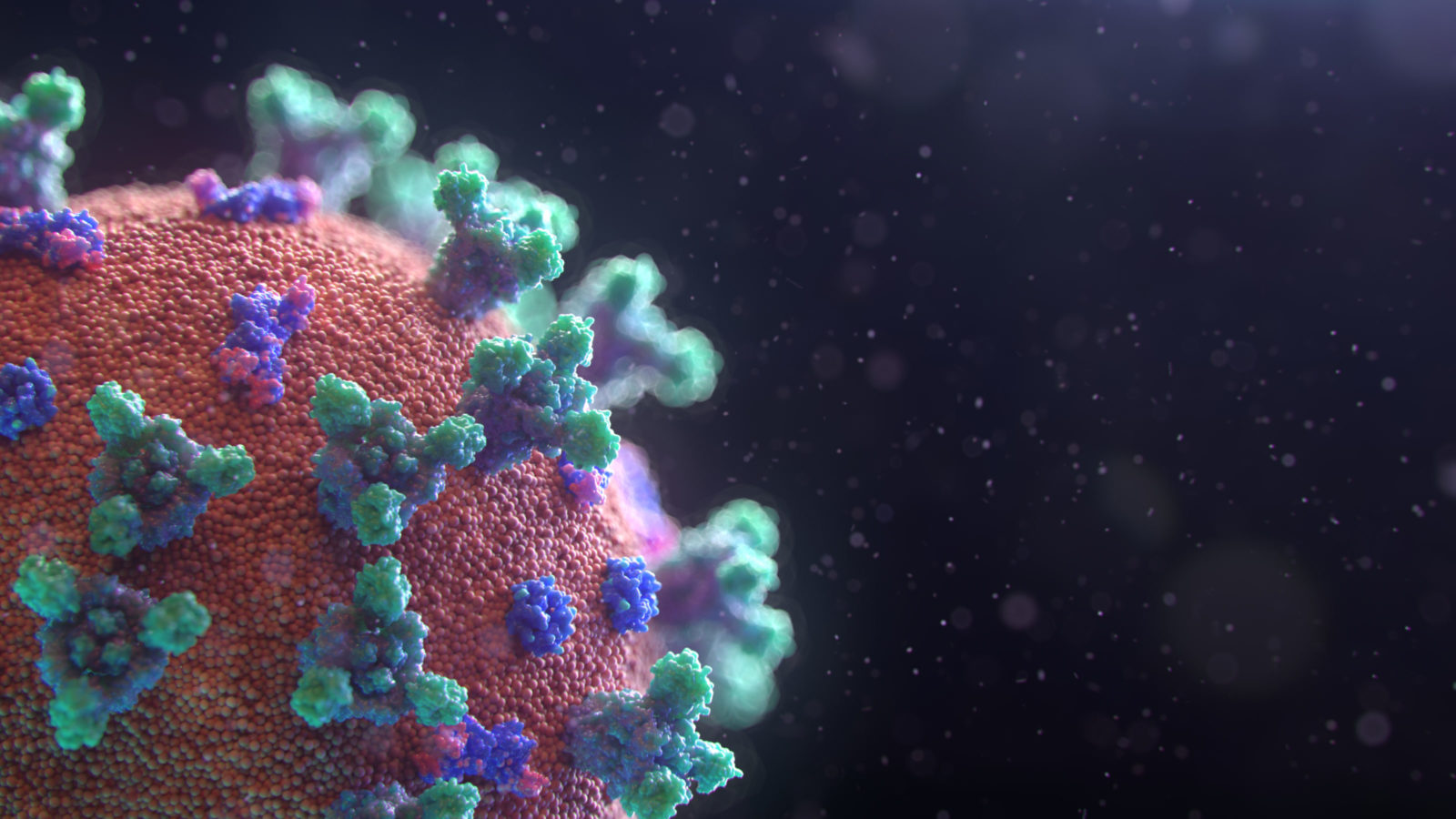

Some researchers confuse not finding a particular type of design with ruling out design

Crossposted at Mind Matters

The world is awash with news and information on 2019-nCoV (also called SARS-CoV-2 or HCoV-19), the coronavirus responsible for the dreaded disease COVID-19. Amid the wave, I read an open-access paper in Nature Medicine on the question of whether this virus was, as the paper puts it, “the product of purposeful manipulation.” In other words, was this virus designed by a human agent?

The authors discuss the part of the coronavirus that attaches with very high affinity (that is, very readily) to human ACE2 receptors. They point out that the software currently used to predict protein binding affinity would not have predicted this result. Therefore, they conclude, this part must have been the result of natural selection and not of design.

I am not a microbiologist and cannot judge the methodology used to determine whether natural selection is capable of producing the high-affinity attachment points for human ACE2 receptors. So, for present purposes, I will simply take the paper’s conclusions as fact. Where I do have experience, however, is in the logic of design inferences.

The intelligent design community has spent quite a bit of time and effort examining the proper logical basis for a design inference. Unfortunately, most of the scientific community has missed the important lessons in design detection that can help them analyze the evidence for and against design in a more rigorous way.

The paper errs in a number of basic ways. First, the authors made the common mistake of assuming that ruling out one specific mechanism of design necessarily rules out all possibilities of design. The only design process the paper considered was a computer analysis of likely binding targets, using software common in the United States, followed by genetic engineering of the needed proteins. The authors did succeed in showing that this particular design hypothesis was false. They failed when they generalized this result to all possible design hypotheses. Put another way, a human agent is not obligated to use the method they described and falsified.

This problem is not unique to them; it is a bad habit of the scientific community that stretches back to the 1800s. When constructing scientific experiments, you define a “null hypothesis” such that proving it false provides evidence for your actual hypothesis. However, this method relies on the null hypothesis being close to a complement of (essentially, the negation of) the hypothesis being tested. Thus, the truth of the hypothesis you are testing should be at least largely entailed by the falsification of the null hypothesis.

Here is an example: If I wanted to test the hypothesis “water has weight,” I would establish the null hypothesis, “water has no weight,” and then show that this hypothesis is false. The two propositions “water has weight” and “water has no weight” are natural complements of each other.

When it comes to design, however, scientists have a bad habit of constructing null hypotheses which are decidedly not complements of the hypothesis they are trying to test. In this case, they set up one possible mode of design and tested it. They presumed that their other hypothesis, that there was no design, was logically entailed by their refutation of a particular design scenario. However, that isn’t the case.

If you want practical examples in this situation, here are a few:

● A researcher could have access to an improved system for determining protein binding which is not publicly available (and therefore the authors of the paper are not aware of it).

● A researcher could use directed selection. Determining a sequence ahead of time and inserting it is not the only way to build proteins. Many engineered proteins are made by introducing random changes and then screening the results. This method does not depend on any predetermined model of protein interaction, because binding is determined experimentally: many variants are tried, and the one that works best is chosen.

● A researcher could use natural selection as a tool of design. The researcher would intentionally put humans in close proximity to animals carrying coronaviruses and wait until a mutation arose that was selected for in humans, so that humans contract the disease.

I have no reason to believe that any of these things happened. But they are possible, and the paper did not address any of them. This is why the authors’ logic of design inference (that it would only be design if a computer model favored it) was faulty when they ruled out design.

In fact, as the ID community has noted, it is generally impossible to exclude design entirely. That fact follows naturally from the logic of design: a thing could be designed to “look natural.” The best we can do is establish that there is no evidence to justify an inference to design. That is decidedly not the same thing as evidence against design.

The reason for this is simple: Designing agents have powers not present in the “natural” world but they are also free to utilize anything that is present in the natural world. Therefore, the powers of agents form a superset of the powers of the natural world. Wind and dirt can’t do calculus but calculus professors certainly can blow dirt around.

So, what should the paper have said? How about: “We have investigated one particular design scenario and found it to be invalid.” The paper could have said that, “based on these results, we don’t find any justification for inferring design.” That’s certainly a valid statement. They err when they confuse failing to infer design with ruling out design. The two simply aren’t equivalent, and the ID movement has considerable research showing why that is the case. Perhaps if the authors, editors, or reviewers were aware of what ID theorists actually say, such mistakes would not be made.

Also by Jonathan Bartlett:

We will never go back to the pre-COVID-19 workplace

The virus forced us to realize: Staying together apart has never been so easy.

and

COVID-19: Do quarantine rules apply to mega-geniuses?

How did Elon Musk, who has a cozy relationship with China, get his upscale car factory classified as an essential business during the pandemic? If we are going to hold some people up as business icons, why should it be those who, in the present COVID-19 troubles, have relations with China that necessarily raise questions?