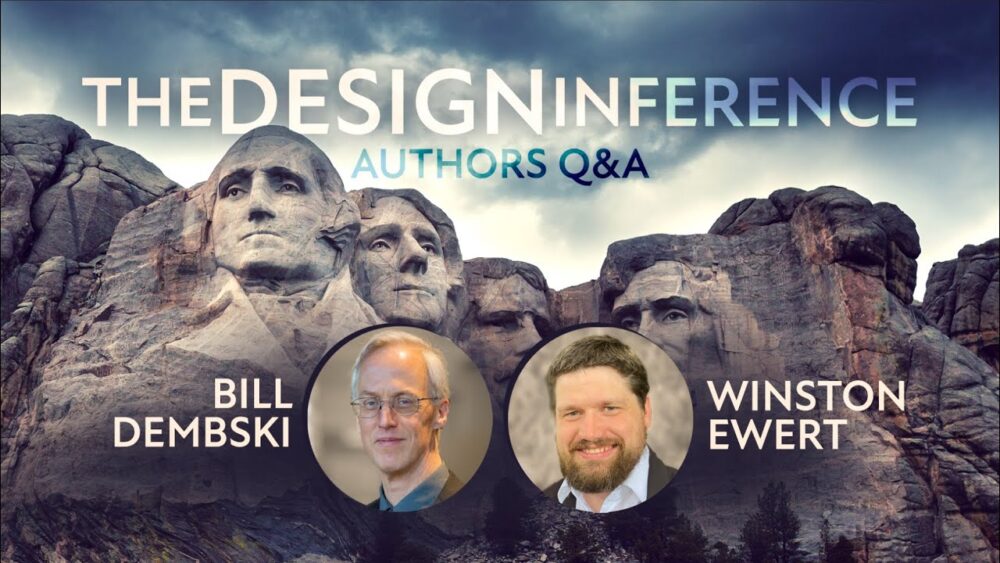

A noted mathematician and philosopher, William A. Dembski is a Founding and Senior Fellow with Discovery Institute’s Center for Science and Culture and a Distinguished Fellow with the Institute’s Walter Bradley Center for Natural and Artificial Intelligence. His most recent books relating to intelligent design include Being as Communion: A Metaphysics of Information (2014), Evolutionary Informatics (2017, co-authored with Robert Marks and Winston Ewert), and the second edition of The Design Inference (2023, co-authored with Winston Ewert).

Dr. Dembski has taught at Northwestern University, the University of Notre Dame, the University of Dallas, and Southwestern Seminary. He has done postdoctoral work in mathematics at MIT, in physics at the University of Chicago, and in computer science at Princeton University. Dr. Dembski was previously an Associate Research Professor in the Conceptual Foundations of Science at Baylor University, where he headed the first intelligent design think-tank at a major research university: The Michael Polanyi Center.

Dr. Dembski is a graduate of the University of Illinois at Chicago, where he earned a bachelor’s in psychology and a doctorate in philosophy. He also received a doctorate in mathematics from the University of Chicago in 1988 and a master of divinity degree from Princeton Theological Seminary in 1996. He has held National Science Foundation graduate and postdoctoral fellowships.

Dr. Dembski has published in the peer-reviewed mathematics, engineering, biology, philosophy, and theology literature. He is the author or editor of more than 25 books. In The Design Inference: Eliminating Chance Through Small Probabilities (Cambridge University Press, 1998), he examined the design argument in a post-Darwinian context and analyzed the connections linking chance, probability, and intelligent causation. The greatly expanded second edition of that book also critiques naturalistic accounts of evolution.

Dr. Dembski has edited several influential anthologies, including The Comprehensive Guide to Science and Faith (Harvest, 2021, co-edited with Casey Luskin and Joseph Holden), Biological Information: New Perspectives (World Scientific, 2013, co-edited with Robert Marks, Michael Behe, Bruce Gordon, and John Sanford), The Nature of Nature: Examining the Role of Naturalism in Science (ISI, 2011, co-edited with Bruce Gordon), Uncommon Dissent: Intellectuals Who Find Darwinism Unconvincing (ISI, 2004), and Debating Design: From Darwin to DNA (Cambridge University Press, 2004, co-edited with Michael Ruse).

As interest in intelligent design has grown in the wider culture, Dr. Dembski has assumed the role of public intellectual. In addition to lecturing around the world at colleges and universities, he has appeared on radio and television. His work has been cited in newspaper and magazine articles, including three front-page stories in the New York Times as well as the August 15, 2005, Time magazine cover story on intelligent design. He has appeared on the BBC, NPR (Diane Rehm, etc.), PBS (Inside the Law with Jack Ford; Uncommon Knowledge with Peter Robinson), C-SPAN2, CNN, Fox News, ABC Nightline, and The Daily Show with Jon Stewart.

Archives

Orwell’s Cold Dystopia is Closer Than We Think

When we speak lies as truth, tyrants come marching in

The War on 2 + 2 = 4

What’s the Relation Between Intelligence and Information?

The fundamental intuition of information as narrowing down possibilities matches up neatly with the concept of intelligence

The Connection Between Intelligence and Information

Can We Trust Large Language Models? Depends on How Truthful They Are

Just because a piece of tech is highly sophisticated doesn’t mean it’s more trustworthy

Truth and Trust in Large Language Models

My Dinner with Steven and Louise Weinberg

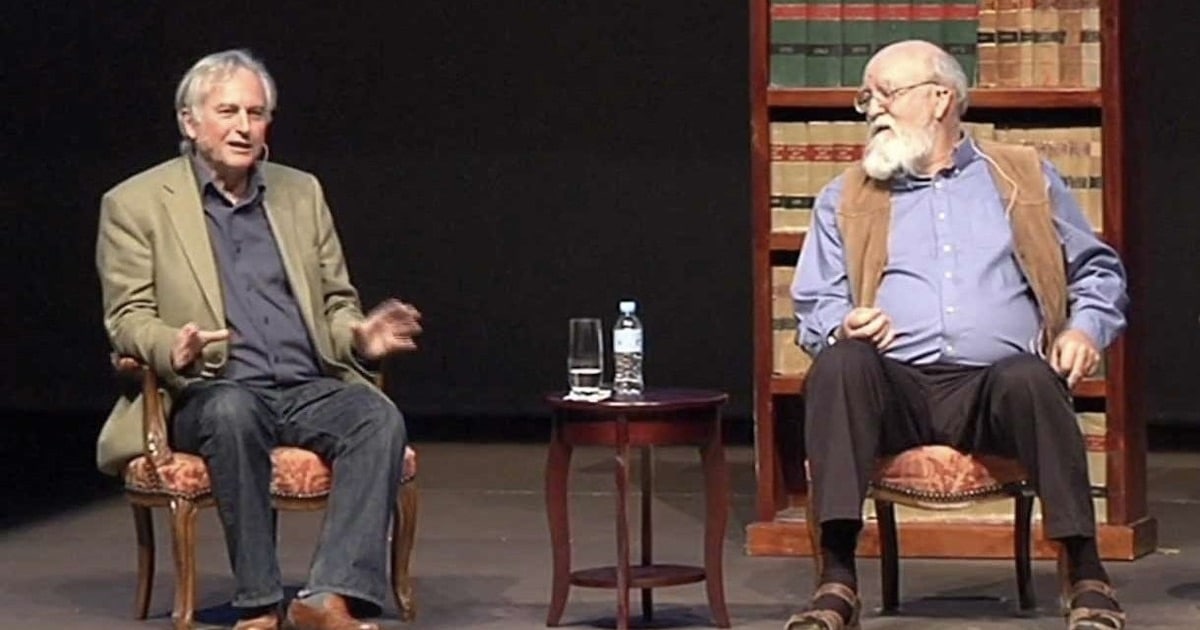

Dawkins, Dennett, and the Taste for Iconoclasm

Debunking the Hype of Artificial General Intelligence

Webinar with Bill Dembski and Winston Ewert on The Design Inference

Orgelian Specified Complexity

Specified Complexity and a Tale of Ten Malibus

Specified Complexity as a Unified Information Measure

Shannon and Kolmogorov Information

Intuitive Specified Complexity: A User-Friendly Account

Specified Complexity Made Simple: The Historical Backdrop

The Primacy of Information Over Matter
